An Exploration of Embodied Visual Exploration

Intro

How should a robot equipped with a camera scope out a new environment? Despite the progress thus far, many basic questions pertinent to this problem remain unanswered: (i) What does it mean for an agent to explore its environment well? (ii) Which methods work well, and under which assumptions and environmental settings? (iii) Where do current approaches fall short, and where might future work seek to improve? Seeking answers to these questions, we first present a taxonomy for existing visual exploration algorithms and create a standard framework for benchmarking them. We then perform a thorough empirical study of the four leading paradigms with two photorealistic simulated 3D environments, a state-of-the-art exploration architecture, and diverse evaluation metrics. Our experimental results offer insights and suggest new performance metrics and baselines for future work in visual exploration. Code, models and data are publicly available.

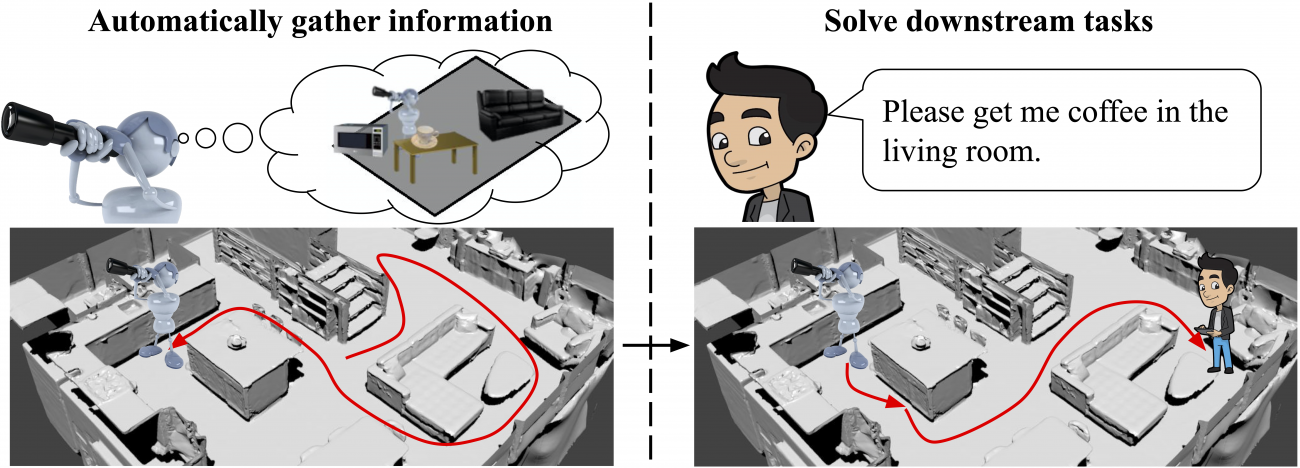

Embodied visual exploration

We focus on embodied visual exploration, where the goal is inherently open-ended and task-agnostic: how does an agent learn to move around in an environment to gather information that will be useful for a variety of tasks it may have to perform in the future? Intelligent exploration in 3D environments is important because it allows unsupervised preparation for future tasks. Embodied exploration agents are flexible to deploy: they can use prior knowledge to autonomously gather useful information in a new environment, which prepares them for downstream tasks that have not yet been specified. For example, a newly deployed home robot could prepare itself by automatically discovering rooms, corridors, and objects in the house. After this exploration stage, it could quickly adapt to instructions such as "Bring coffee from the kitchen to Steve in the living room."

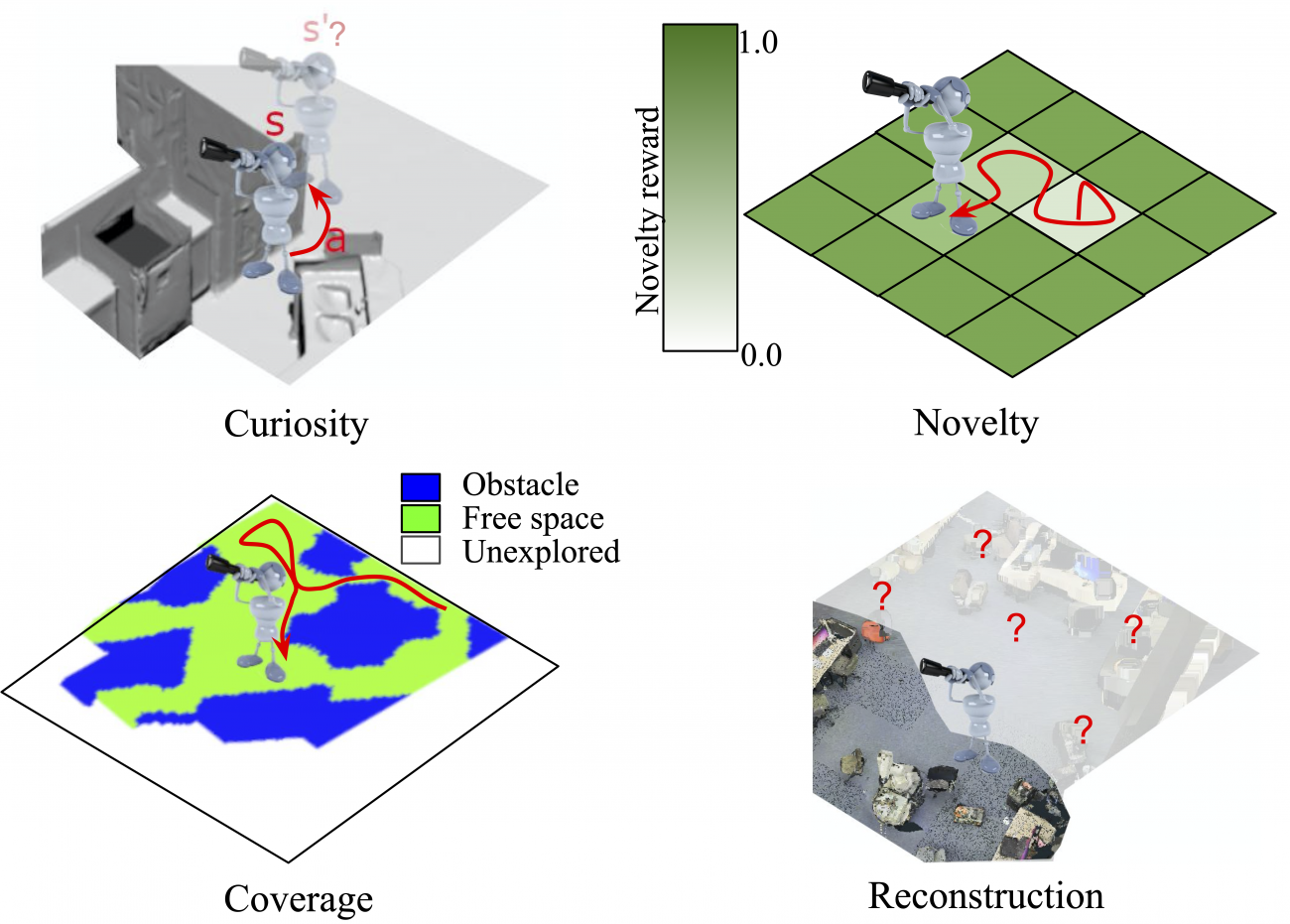

Taxonomy of exploration methods

We present a taxonomy of past approaches to embodied visual exploration by grouping them into four leading paradigms: curiosity, novelty, coverage, and reconstruction, shown schematically in the figure below. Curiosity rewards visiting states that are predicted poorly by the agent's forward-dynamics model. Novelty rewards visiting less frequently visited states. Coverage rewards visiting "all possible" parts of the environment. Reconstruction rewards visiting states that allow better reconstruction (hallucination) of the full environment.
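As a rough illustration of how the four paradigms differ, each one can be written as a small reward function. The sketch below is a toy simplification under stated assumptions, not the reward definitions used in the paper: states are hashable identifiers, observations are plain lists of floats, and all function names are hypothetical.

```python
import math
from collections import Counter


def curiosity_reward(predicted_next, actual_next):
    """Curiosity: squared error of a forward-dynamics prediction.

    Assumes some learned model produced `predicted_next`; poorly
    predicted transitions yield a larger reward.
    """
    return sum((p - a) ** 2 for p, a in zip(predicted_next, actual_next))


def novelty_reward(visit_counts, state):
    """Novelty: count-based bonus that shrinks as a state is revisited."""
    visit_counts[state] += 1
    return 1.0 / math.sqrt(visit_counts[state])


def coverage_reward(seen_cells, visible_cells):
    """Coverage: number of cells observed for the first time this step."""
    new_cells = set(visible_cells) - seen_cells
    seen_cells |= new_cells  # update the running set in place
    return float(len(new_cells))


def reconstruction_reward(previous_error, current_error):
    """Reconstruction: drop in error when predicting unseen views.

    Assumes an external model reports a scalar reconstruction error
    before and after the agent takes its step.
    """
    return previous_error - current_error


if __name__ == "__main__":
    counts = Counter()
    seen = set()
    print(novelty_reward(counts, (0, 0)))            # first visit
    print(novelty_reward(counts, (0, 0)))            # second visit, smaller bonus
    print(coverage_reward(seen, [(0, 0), (0, 1)]))   # two new cells
    print(curiosity_reward([1.0, 2.0], [1.0, 4.0]))  # prediction error
    print(reconstruction_reward(0.5, 0.25))          # error reduction
```

In this toy form, novelty and coverage depend only on where the agent has been, while curiosity and reconstruction depend on a learned model's predictions. That difference is one reason the paradigms can behave differently in the same environment.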

Benchmark for embodied visual exploration

Despite the growing literature on embodied visual exploration, it has been hard to analyze what works when and why. This difficulty arises from the lack of standardization in the experimental conditions such as simulation environments, model architectures, learning algorithms, baselines, and evaluation metrics. To overcome these problems, we present a unified view of exploration algorithms for visually rich 3D environments, and a novel benchmark to understand their strengths and weaknesses. The benchmark uses two well-established photorealistic 3D datasets (Active Vision and Matterport3D), which we study using a state-of-the-art neural architecture. We also develop diverse evaluation metrics based on mapping and object discovery performance during exploration, as well as transferability of exploration behavior to various downstream tasks. We publicly release the code for our benchmark.

Experimental analysis of exploration methods

We sample representative learning-based exploration methods, together with some more traditional heuristic approaches, and study their performance on our benchmark. After extensively evaluating these exploration methods, we highlight their strengths and weaknesses and identify key factors for learning good exploration policies. Please see our paper for the results of our study.

ECCV workshop talk

We presented our work at the 4D vision workshop held in conjunction with ECCV 2020.

Citation

@article{ramakrishnan2021exploration,
  author = {Ramakrishnan, Santhosh K. and Jayaraman, Dinesh and Grauman, Kristen},
  title = {An Exploration of Embodied Visual Exploration},
  year = {2021},
  doi = {10.1007/s11263-021-01437-z},
  url = {https://doi.org/10.1007/s11263-021-01437-z},
  journal = {International Journal of Computer Vision}
}

Acknowledgements

UT Austin is supported in part by the IFML NSF AI Institute, DARPA Lifelong Learning Machines and the GCP Research Credits Program.