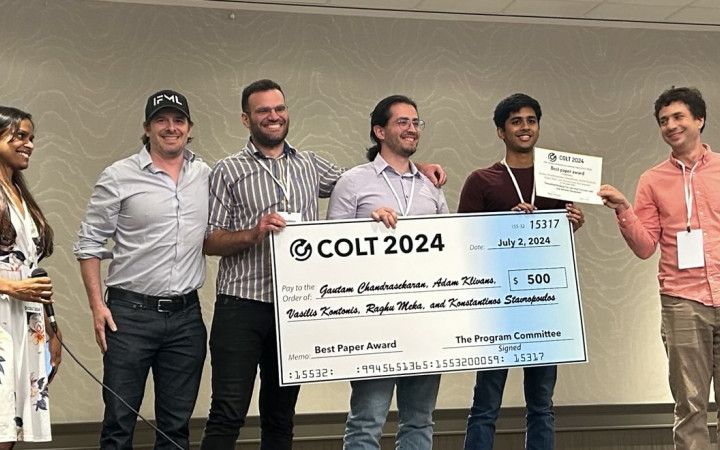

Smoothed Analysis for Learning Concepts with Low Intrinsic Dimension earns Best Paper Award at COLT 2024!

Authored by IFML Director Adam Klivans, students Gautam Chandrasekaran, Konstantinos Stavropoulos, IFML postdoc Vasilis Kontonis, and former UT CS PhD Raghu Meka

Congratulations to IFML Director Adam Klivans, students Gautam Chandrasekaran, Konstantinos Stavropoulos, IFML postdoc Vasilis Kontonis, and former UT CS PhD Raghu Meka on earning a Best Paper Award at the 37th Annual Conference on Learning Theory (COLT 2024) for their paper "Smoothed Analysis for Learning Concepts with Low Intrinsic Dimension."

Abstract: In the well-studied agnostic model of learning, the goal of a learner– given examples from an arbitrary joint distribution on Rd × {±1}– is to output a hypothesis that is competitive (to within ϵ) of the best fitting concept from some class. In order to escape strong hardness results for learning even simple concept classes in this model, we introduce a smoothed analysis framework where we require a learner to compete only with the best classifier that is robust to small random Gaussian

perturbation.

This subtle change allows us to give a wide array of learning results for any concept that (1) depends on a low-dimensional subspace (aka multi-index model) and (2) has a bounded Gaussian surface area. This class includes functions of halfspaces and (low-dimensional) convex sets, cases that are only known to be learnable in non-smoothed settings with respect to highly structured distributions such as Gaussians.

Perhaps surprisingly, our analysis also yields new results for traditional non-smoothed frameworks such as learning with margin. In particular, we obtain the first algorithm for agnostically learning intersections of k-halfspaces in time kpoly( log k

ϵγ ) where γ is the margin parameter. Before our work, the best-known runtime was exponential in k (Arriaga and Vempala, 1999a).