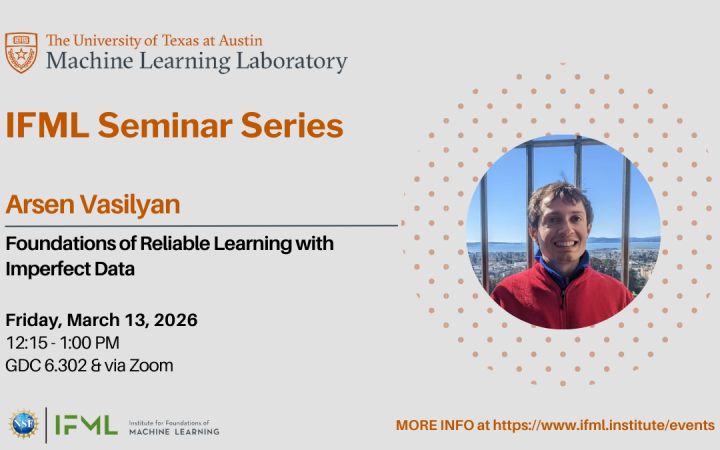

IFML Seminar: 03/13/26 - Foundations of Reliable Learning with Imperfect Data

Arsen Vasilyan, postdoctoral fellow at the Institute for Foundations of Machine Learning (IFML)

The University of Texas at Austin

Gates Dell Complex (GDC 6.302)

2317 Speedway

Austin, TX 78712

United States

Abstract: A central challenge in machine learning is reliability: ensuring that an algorithm’s predictions remain accurate and stable even when the data is noisy, adversarial, or misspecified. This talk presents recent progress on learning algorithms whose robustness is grounded in provable guarantees.

The first part of the talk introduces a framework for assumption-aware learning—algorithms that explicitly recognize when their modeling assumptions fail. Rather than always producing a prediction, these learners may respond with “I don’t know” when the conditions required for correctness are violated. We formalize this paradigm as testable learning, which extends the classical framework of agnostic learning. In agnostic learning, the goal is to achieve near-optimal accuracy even when the target hypothesis class does not perfectly explain the data.

The second part of the talk focuses on supervised learning in the strong contamination model, where an adversary may arbitrarily corrupt a small fraction of the training examples, including both labels and features. While robust learning is well understood in unsupervised settings, general-purpose efficient algorithms for supervised learning under such adversarial noise have remained elusive. We present the first general-purpose efficient algorithm of this kind, applicable to a broad family of hypothesis classes. As a highlight, we show that constant-depth Boolean circuits can be learned efficiently under strong contamination, with running time matching the classical algorithm of Linial, Mansour, and Nisan. This result resolves a 30-year-old open problem and demonstrates that reliability under adversarial corruption is compatible with optimal computational efficiency.

Talk based on joint work with Gautam Chandrasekaran, Surbhi Goel, Aravind Gollakota, Adam R. Klivans, Ronitt Rubinfeld, Abhishek Shetty, Konstantinos Stavropoulos and Kevin Tian.

Bio: Arsen Vasilyan is a postdoctoral fellow at the Institute for Foundations of Machine Learning. His research interests lie in learning theory, sublinear algorithms, and the interplay between these two fields. Arsen was previously a research fellow at the Simons Institute for the Theory of Computing. Prior to that, he received a PhD from the Massachusetts Institute of Technology, advised by Jonathan Kelner and Ronitt Rubinfeld.

Zoom link: https://utexas.zoom.us/j/84254847215